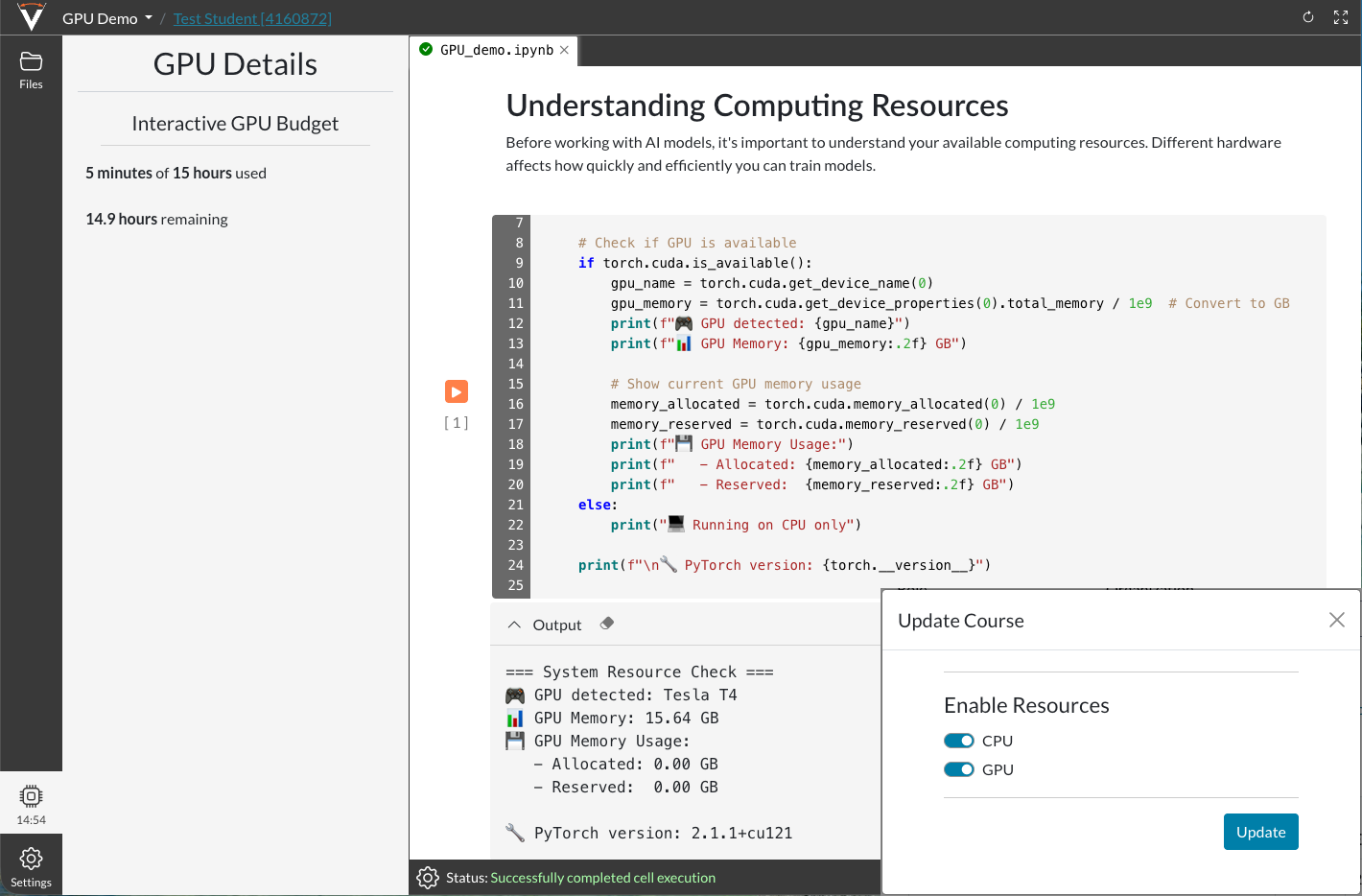

Give users across your organization access to the GPU and CPU resources they need, from everyday hands-on labs and technical training to AI development, simulation, data science, and high-performance workloads. Vocareum makes it easy to deliver scalable, browser-based, virtual, and on-prem compute environments with centralized controls, budget guardrails, and efficient resource management.

Support compute needs ranging from everyday hands-on labs and technical training to AI development, simulation, data science, and high-performance workloads, with GPU and CPU resources that scale across virtual and on-prem environments based on performance, delivery, and budget needs.

Dedicated GPU Power for Scalable Workloads

Give users access to dedicated GPU resources for AI development, model training, data science, accelerated technical labs, and other workloads that benefit from GPU performance. Scale from smaller hands-on environments to more demanding compute workloads as requirements grow.

Scalable Compute for Parallel and Distributed Workloads

Support parallel and distributed workloads with high-performance compute clusters designed for simulation, data processing, technical training, and other workflows that require scalable CPU or GPU resources.

Dedicated Infrastructure for Specialized Performance Needs

Run specialized or high-demand workloads on dedicated infrastructure when performance consistency, hardware isolation, or direct control over the environment matters most.

Flexible Infrastructure for Different Delivery Models

Choose virtual or on-prem compute environments based on your security, performance, delivery, and resource requirements, with the flexibility to support everything from technical training labs to AI development and advanced compute workflows.

Scalable Compute: Support everything from everyday hands-on labs and technical training to AI development, simulation, data science, and high-performance workloads with GPU and CPU resources that scale based on demand.

Flexible Infrastructure: Dynamically allocate virtual or on-prem compute resources based on the specific needs of each lab, course, development environment, or technical workflow.

Bare Metal Options: Run specialized or high-demand workloads on dedicated infrastructure when performance consistency, hardware isolation, or direct environment control matters most.

Governed Usage: Manage access, budgets, and resource consumption with centralized controls that help deliver compute more efficiently across a wide range of workloads.

Provide browser-based GPU and CPU environments for everyday hands-on labs, technical training, and development workflows that need more flexibility than local machines can provide.

Benefits:

Support repeatable environments for technical training.

Give users access to the compute they need without local setup overhead.

Scale resources based on the requirements of each lab or course.

Support AI development, model training, experimentation, and data science workflows with scalable GPU and CPU resources that help users work with larger datasets and more demanding tools.

Benefits:

Provide GPU resources for model training and experimentation.

Support data science workflows that exceed local machine limits.

Scale from smaller development environments to more demanding workloads.

Deliver the infrastructure needed for simulation, rendering, complex modeling, and other advanced workloads that require scalable clusters, specialized hardware, or virtual and on-prem compute environments.

Benefits:

Support parallel and distributed workloads at higher scale.

Match infrastructure to specialized performance requirements.

Use virtual, on-prem, dedicated, or cluster-based environments for advanced compute needs.

Dedicated GPUs are a strong fit for AI development, model training, and other accelerated workloads that benefit from GPU processing. High-performance clusters are better suited for larger-scale parallel or distributed workloads such as simulation, rendering, and complex processing across multiple resources.

Bare metal is a better fit than virtualized environments when workloads require dedicated hardware, stronger isolation, or more direct control over the underlying infrastructure. It is especially useful for specialized or high-demand workloads.

Yes, different labs can use different compute configurations. Each lab can be aligned to its own requirements, whether that means CPU-based environments for everyday hands-on labs and technical training, GPU-backed environments for AI development, cluster-based compute, bare metal, or virtual and on-prem deployments.

Vocareum provides centralized budget and usage controls that help you manage access, monitor consumption, and deliver compute environments more efficiently across a wide range of workloads.

See how Vocareum helps you provide scalable, browser-based, virtual, and on-prem GPU and CPU environments for hands-on labs, AI development, technical training, and advanced workloads with centralized controls and budget guardrails.