Give learners in workforce, certification, technical enablement, and higher-education programs preconfigured AI workspaces, model access, and real cloud targets in one secure lab environment. Train on real tools, measure real outcomes, and control cost, access, and teardown from one platform.

A single lab environment can bring together the workspace, the agent, the live cloud target, the control layer, and the grading engine.

Browser-based IDEs, Jupyter, terminals, AI Notebook, Windows VDI, and custom containers.

Claude, GPT, Gemini, Bedrock, Azure OpenAI, and approved agentic tooling behind one controlled access layer.

Real AWS, Azure, GCP, Databricks, databases, GPUs, HPC, and isolated networks with per-learner isolation.

Budget caps, scoped IAM, automated teardown, policy enforcement, no exposed API keys, full audit logs, and tenant isolation.

Grade code, notebooks, live deployments, agent outputs, and open-ended projects with LMS score passback.

LTI 1.3, REST API, SAML 2.0 SSO, Databricks identity support, and white-label delivery into customer workflows.

Give teams preconfigured lab environments first, then let them work with the AI tools, models, and cloud targets they already care about.

No Setup Friction: Launch the exact preconfigured environment learners need, with no local installs or API key handling.

No Budget Surprises: Meter every model call and enforce hard limits across learners, labs, and cohorts.

No Fake Practice: Use real production interfaces instead of screenshots or simplified simulators.

No Outcomes Gap: Verify that the application actually deployed and works in the assigned account.

Enterprise-Ready Controls: Support for SOC 2 Type II, GDPR, FERPA, and WCAG 2.1 AA sits alongside auditability, access controls, and policy enforcement.

A single Vocareum lab can combine the workspace, the agentic tooling, the controlled cloud target, and the grading system.

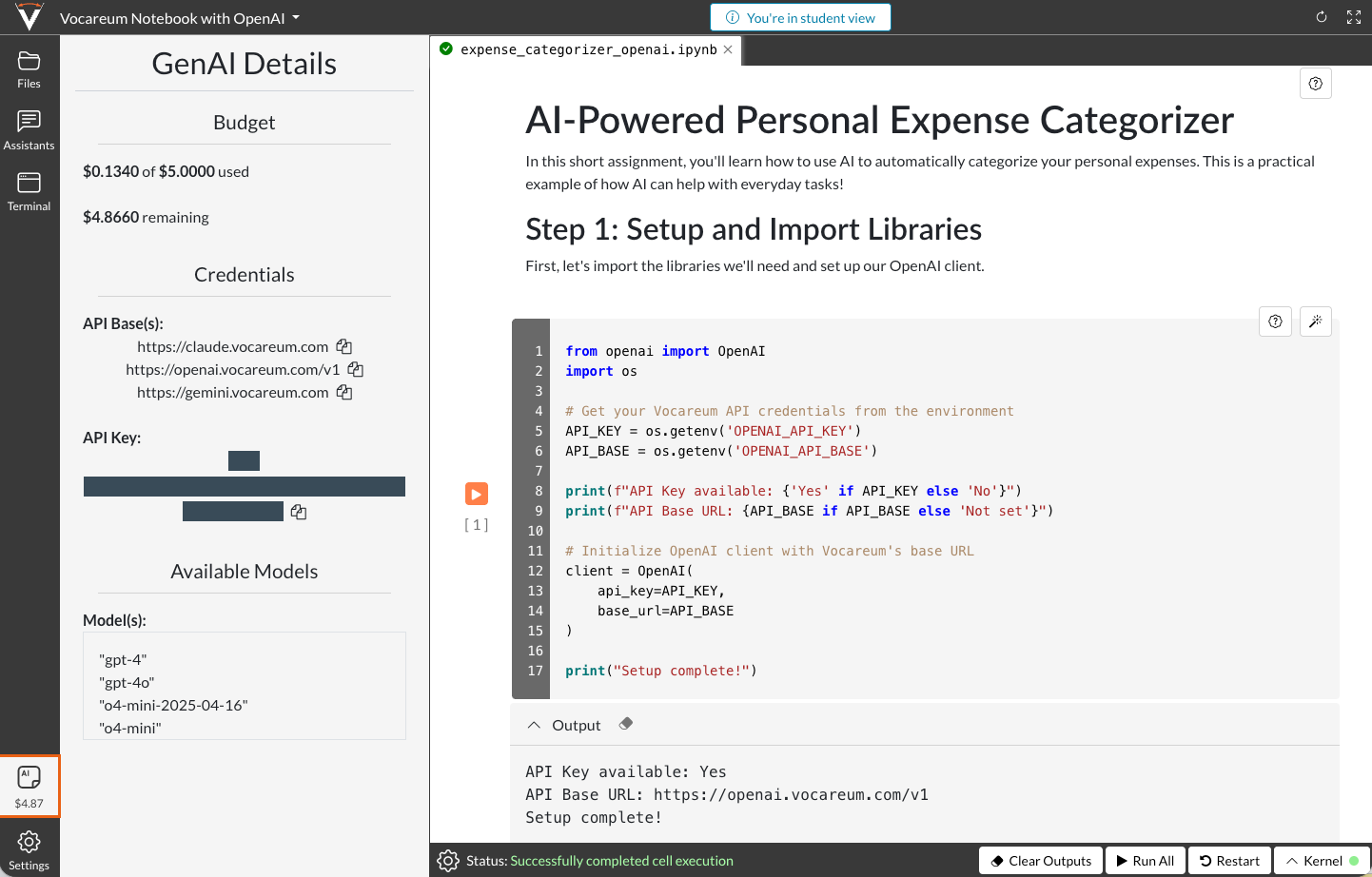

A learner launches one Vocareum lab and gets the full workflow together: a browser-based VS Code workspace, Claude Code preconfigured, controlled model access, and a real AWS account ready for deployment.

Vocareum provisions the workspace, extensions, repos, and scoped AWS account inside the lab.

Claude Code writes and revises the application through the AI Gateway, so no API keys are exposed.

The learner deploys to Lambda, API Gateway, S3, or other real AWS services under budget and IAM guardrails.

Vocareum validates the live deployment, records the audit trail, and tears resources down automatically.

A second pattern is a Databricks-centered lab where Codex and Databricks AI tooling work in the same controlled environment for notebook, data, and model workflows.

Vocareum provisions the Databricks workspace, identity path, and controlled compute as part of the lab.

Codex supports notebook and application work while Databricks services handle data, model, and workspace operations.

All model and infrastructure usage stays inside one controlled environment with metering, policy rules, and spend limits.

Vocareum grades the notebook, model output, or deployment artifact and sends results back through the delivery system.

Each lab combines preconfigured environments, controlled model access, real cloud targets, assessment, integrations, and enterprise controls in one system.

Launch browser-based IDEs, Jupyter, terminals, AI Notebook, or custom containers with zero setup.

Browser-based work environments

Persistent state when needed

Standardized builds across cohorts

Centralize access to foundation models and agentic tools without leaking keys or losing spend visibility.

No learner-facing API secrets

Metering, logging, and policy controls

One layer across Anthropic, OpenAI, Google, AWS, and Microsoft

Give learners the same interfaces they will use on the job, with controls strong enough for enterprise programs.

AWS, Azure, GCP, and Databricks

Databases, GPUs, HPC, and isolated networks

BYO cloud account support

Control cost and risk continuously, not just at login or provisioning time.

Budget caps per learner, lab, or cohort

Automated teardown and orphan prevention

IAM isolation, tenant isolation, and audit logs

SOC 2 Type II, GDPR, FERPA, and WCAG 2.1 AA support

Validate code, notebooks, live deployments, and open-ended agent outputs with instant scoring.

Custom scripts and nbgrader

Deployment validation for real infrastructure

AI-assisted grading where appropriate

Fit into existing delivery systems instead of forcing a new learner workflow.

LTI 1.3 with grade passback

REST API and SAML 2.0

Databricks SSO and white-label delivery

Vocareum supports technical enablement, platform controls, and academic delivery with the same core lab, access control, and assessment engine.

Support learners as they move from core AI and development concepts into building, testing, and deploying agentic workflows and AI assistants. With secure, preconfigured environments and pre-integrated access to leading tools, institutions can provide a practical path from learning concepts to applying them in hands-on lab environments.

Benefits:

Train on real IDE-to-agent-to-cloud workflows

Expose the production interfaces learners will use at work

Measure whether the final deployment actually runs

Prepare learners to work with the development tools and workflows used in modern agentic AI development. Vocareum enables instant access to governed lab environments where learners can build, test, and deploy agentic AI projects without spending valuable time on setup.

Benefits:

Hard spend caps and automated teardown

IAM and tenant isolation with full audit trails

Policy enforcement for internet, APIs, and model use

Give programs and teams a secure foundation for exploring agentic AI in isolated lab environments. With sandboxed experimentation, usage governance, and zero-setup development, Vocareum makes it easier to support experimentation while maintaining control.

Benefits:

LMS grade passback and learner analytics

Auto-grading for code, projects, and deployments

Socratic tutor patterns without giving away answers

Vocareum launches preconfigured browser-based workspaces with the right tools, repos, policies, and cloud access already wired in, so learners start building immediately instead of provisioning their own environments.

Every session runs in controlled environments with per-learner isolation, scoped IAM, budget caps, policy enforcement, and automated teardown. That lets teams experiment with real tools without opening uncontrolled access.

IDEs, Jupyter, terminals, AI Notebook, Claude Code, Codex, Amazon Q, Copilot, cloud consoles, databases, GPUs, and customer-specific tooling can all be provisioned as part of the lab definition.

The AI Gateway meters and logs model calls, while the cloud layer enforces budgets, IAM scopes, teardown rules, and tenant policies. Buyers can manage prompts, model access, infrastructure use, and outcomes in one system.

Yes. Vocareum can validate code, notebooks, live deployments, agent outputs, and open-ended projects so teams measure working outcomes, not just tool usage.

Yes. Vocareum supports LTI 1.3, grade passback, REST APIs, SAML 2.0, white-label delivery, and customer-owned cloud accounts, so it fits universities, certification bodies, platform partners, and technical enablement teams.

Book a demo to see the platform in action, or explore our case studies to discover how Vocareum can transform your training or educational program.